Brief History of Virtualization

Virtualization is all around us these days. In a world full of clouds, hypervisors, remote desktops and many other “virtual” things, it’s important to keep in mind how it all started. Even though it might seem like virtualization took off only a few years ago, it has actually been around for over 50 years! Granted, that early technology was almost completely different from what we have now, but isn’t that the case with every branch of IT? Get yourself a nice cup of coffee and find a comfortable chair – this post will be one of the bigger ones.

IBM Virtual Prehistory

Virtualization’s roots go back to the 1960s and IBM, where programmer Jim Rymarczyk was deeply involved in the first mainframe virtualization effort. The story starts on July 1, 1963, when the Massachusetts Institute of Technology (MIT) announced Project MAC (Mathematics and Computation, later also read as Multiple Access Computer). The project was funded by a $2 million grant from ARPA (today’s DARPA) for research into computers, specifically in the areas of operating systems, artificial intelligence, and computational theory. As part of this research, MIT needed new computer hardware that could serve more than one user simultaneously, and it sought proposals from several computer vendors, including GE and IBM.

At the time, IBM was not willing to commit to a time-sharing computer because it did not believe there was enough demand, and MIT did not want to use a specially modified system. GE, on the other hand, was willing to commit, so MIT chose GE as its vendor. Losing this opportunity was a wake-up call for IBM, which began to take notice of the demand for such a system, especially once it heard that Bell Labs needed something similar.

Jim Rymarczyk, a retired IBM Fellow (named in 1996), spent over 43 years at IBM, from May 1968 to January 2012. He joined IBM as a programmer in the 1960s, just as the mainframe giant was inventing virtualization. While he didn’t invent virtualization, he has played a key role in advancing the technology over the past four decades. When he joined IBM full-time, he worked on an experimental time-sharing system, a separate project that was phased out in favor of the CP-67 code base, which he said “was more flexible and efficient in terms of deploying VMs for all kinds of development scenarios, and for consolidating physical hardware.”

In response to the needs of MIT and Bell Labs, IBM built CP-40, a research control program that ran on a specially modified System/360 Model 40 mainframe. CP-40 was never sold to customers and was only used in the lab. It is still important, however, because it later evolved into CP-67.

According to a Network World article, IBM’s CP-67 software used partitioning technology to allow many applications to run at once on a single mainframe computer. While its impact on mainframe users was substantial, it took years before a direct descendant of IBM’s work came back to life on x86 platforms. In the meantime, with Unix and C compilers an adept user could run just about any program on any platform, but all the software still had to be compiled on the platform it was meant to run on. True software portability required some form of software virtualization.

In 1990, Sun Microsystems began a project known as “Stealth”. It was run by engineers who had become frustrated with Sun’s use of C/C++ APIs and felt there was a better way to write and run applications. Over the next several years the project went through several names, including Oak and Web Runner, until in 1995 it was finally renamed Java.

In 1994, Sun targeted Java at the World Wide Web, which it saw as a major growth opportunity. The Internet is a large network of computers running many different operating systems, and at the time there was no way to run rich applications across all of them; Java was the answer to this problem. In January 1996, the Java Development Kit (JDK) was released, allowing developers to write applications for the Java platform.

At the time, Java’s portability set it apart from other mainstream languages. Java allowed you to write an application once, then run it on any computer with the Java Runtime Environment (JRE) installed. The JRE has long been a free download, first from Sun Microsystems’ website and now from Oracle’s.

Java works by compiling the application into Java bytecode, an intermediate, platform-neutral instruction set that is executed by the Java Virtual Machine rather than directly by the CPU. When you build your program, the Java compiler (javac) does not produce native machine code; it produces bytecode. When the program runs, the virtual machine interprets that bytecode and, in modern implementations, uses Just-in-Time (JIT) compilation to translate frequently executed parts into native machine code right before they execute. This is similar in spirit to the way Unix revolutionized operating systems through its use of the C programming language. Because the final translation to machine code happens on the user’s computer, the developer does not need to worry about which operating system or hardware platform the end user will run the application on, and the user does not need to know how to compile a program; that is handled by the JRE.
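As a minimal sketch of the compile-once, run-anywhere workflow, the program below is compiled to bytecode a single time and can then be run unchanged on any machine with a JVM. The class name `HelloPlatform` is just an illustrative choice; the program only reads standard system properties to report which platform the JVM happens to be running on.

```java
// HelloPlatform.java (illustrative example)
// Compile once:  javac HelloPlatform.java   -> produces HelloPlatform.class (bytecode)
// Run anywhere:  java HelloPlatform         -> the local JVM loads and executes that bytecode
public class HelloPlatform {

    // Describes the platform that the JVM executing this bytecode is running on.
    static String describePlatform() {
        String os = System.getProperty("os.name");       // e.g. "Linux" or "Windows 10"
        String arch = System.getProperty("os.arch");     // e.g. "amd64" or "aarch64"
        String vm = System.getProperty("java.vm.name");  // name of the JVM implementation
        return "Running on " + os + " (" + arch + ") inside " + vm;
    }

    public static void main(String[] args) {
        // The same .class file prints a different line on each platform,
        // without being recompiled.
        System.out.println(describePlatform());
    }
}
```

Running `javap -c HelloPlatform` after compiling disassembles the class file, which is a quick way to see that what ships is bytecode for the JVM rather than native machine code for a particular CPU.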

The JRE is composed of many components, the most important of which is the Java Virtual Machine (JVM). Whenever a Java application runs, it runs inside the JVM. You can think of the JVM as a very small operating system created with the sole purpose of running your Java application. Since Sun, and now Oracle, goes to the trouble of porting the JVM to everything from your cell phone to the servers in your data center, you don’t have to. You can write the application once and run it anywhere. At least, that is the idea; in practice there are some limitations.

With the growing complexity of server environments came expanding administrative costs, driven by the need to hire more experienced IT professionals and to carry out a wide variety of tasks, including Windows Server backup and recovery. These tasks required more manual intervention than many IT budgets could support. Server maintenance costs, especially those tied to Windows Server backup, were climbing, and more personnel were needed to work through a growing number of day-to-day tasks. Just as important were the questions of how to limit the impact of server outages, improve business continuity, and create more robust disaster recovery plans.

VMware Conquest of Virtualization

The concept of virtualization went largely unremarked during the nearly two decades in which client/server computing rose on x86 platforms. Still, it served as a powerful inspiration for VMware, which revived the concept and applied it to x86 machines. In the late 1990s, VMware stepped in with its own virtualization model to tackle a set of growing problems. These included low x86 server utilization, where perhaps only 10-15% of a server’s capacity was used, and the rising costs of electrical power, cooling, and a fast-growing server and storage footprint.

In 1999, VMware introduced virtualization to x86 systems, which, as VMware points out, were not designed for virtualization the way mainframes were. The problem centered on nearly 20 instructions that could cause application termination or a system crash when virtualized. VMware addressed this with what it called adaptive virtualization: a routine that intercepted these instructions as they were generated and allowed all other instructions to pass through without intervention.
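To make the “intercept a few instructions, pass the rest through” idea more concrete, here is a deliberately tiny toy model. It is not VMware’s implementation: VMware’s real approach worked on x86 machine code and is generally described as binary translation, while this sketch just rewrites a list of instruction strings. The mnemonics in `SENSITIVE` are real x86 instructions commonly cited as problematic for virtualization, but the `vmm_emulate_*` handler names are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Set;

// Toy model only (requires Java 9+ for Set.of/List.of). The translator and its
// vmm_emulate_* handlers are hypothetical, not VMware's actual code.
public class ToyTranslator {

    // A few x86 instructions that behave differently, or reveal host state,
    // when executed unprivileged inside a guest, so they cannot run directly.
    static final Set<String> SENSITIVE = Set.of("POPF", "PUSHF", "SGDT", "SIDT", "SLDT", "SMSW");

    // Rewrites a block of guest instructions: sensitive ones become a call into a
    // (hypothetical) emulation routine in the monitor, everything else is kept
    // as-is so it can run directly on the CPU at full speed.
    static List<String> translate(List<String> guestBlock) {
        List<String> out = new ArrayList<>();
        for (String insn : guestBlock) {
            String mnemonic = insn.split(" ", 2)[0].toUpperCase();
            if (SENSITIVE.contains(mnemonic)) {
                out.add("CALL vmm_emulate_" + mnemonic.toLowerCase()); // intercepted
            } else {
                out.add(insn); // passed through without intervention
            }
        }
        return out;
    }

    public static void main(String[] args) {
        List<String> block = List.of("MOV eax, 1", "POPF", "ADD eax, ebx", "SGDT [mem]");
        translate(block).forEach(System.out::println);
    }
}
```

Running `main` prints the block with `POPF` and `SGDT [mem]` replaced by calls to the hypothetical handlers, while the `MOV` and `ADD` lines come through unchanged.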

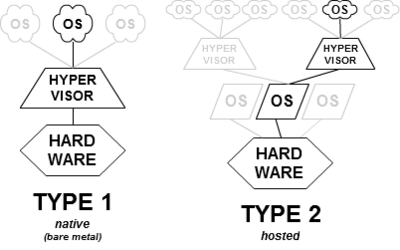

I mention VMware because it is really the market leader in virtualization today. In 2001, VMware released two new products as it branched into the enterprise market: ESX Server and GSX Server. GSX Server allowed users to run virtual machines on top of an existing operating system, such as Microsoft Windows; this is known as a Type-2 hypervisor. ESX Server (later ESXi) is a Type-1 hypervisor and does not require a host operating system to run virtual machines.

A Type-1 hypervisor is generally more efficient than a Type-2 hypervisor, since it can be optimized specifically for virtualization and does not need the resources it takes to run a full traditional operating system underneath it.

Since releasing ESX Server in 2001, VMware has seen exponential growth in the enterprise market and has added many complementary products to enhance ESX Server. With this breakthrough, VMware was first to market with a product that slowly began to attract attention and then accelerated, to the point where, by 2008, a significant percentage of companies had begun to virtualize a small portion of their non-business-critical applications and were carrying out Windows Server backup on their new virtual machines.

The Future is Virtual

By the late 2000s, VMware had attracted plenty of attention and was joined by several other vendors offering virtualization solutions, some with better Windows Server backup support than others. VMware nevertheless continues to hold steady with nearly three-quarters of the hypervisor market. Only Microsoft’s Hyper-V has won a significant share of the rest, followed by Citrix XenServer. Still, the market’s potential is so big that the competition will continue, fueled in part by fast-rising investment in the sector from companies like Amazon and Microsoft, managed service providers (MSPs), and longtime IT industry players who offer virtualization as part of cloud and offsite computing services.

Summary

Computer virtualization has a long history, spanning more than half a century. It can make your applications easier to access remotely, let them run on more systems than originally intended, improve stability, and use resources more efficiently. Some technologies, such as virtual desktops, can be traced back to the ’60s; others, such as virtualized applications, only a few years.

I hope you’ve enjoyed this post, and I hope to get some feedback from my loyal readers, as this is a format I’ve never tried before. If you’d like to see more history lessons like this one, give me a like, share this article, or send me an email. That would be all – Wilk’s out.